Blog

AppBlock Keeps Showing a Blank White Screen: Here’s What Actually Fixed It

So there I was, three days into a digital detox experiment, feeling pretty good about myself. I had AppBlock running on my Android phone, blocking social media from 9 PM to 7 AM, and it was actually working. Then one morning I opened the app to check my schedule, and instead of the dashboard, I got a completely white, blank screen. Nothing. Just white.

I tapped around. Closed and reopened. Restarted the phone. Still blank. I figured I’d accidentally done something during an update, so I started digging. What I eventually found is a specific bug that shows up for a lot of AppBlock users — tied to something called the MobileSoft FileProvider cache — and it’s not obvious at all unless you know where to look.

This post is basically what I wish I had found when I was searching. No fluff, just what the problem actually is and how to fix it properly.

How the blank screen problem started for me

I’d been using AppBlock — made by MobileSoft — for about two months at this point. It’s a solid app for restricting your own phone usage. You set profiles, define blocked apps or websites, and it enforces those rules even if you try to disable it (there’s a “strict mode” that really locks you out).

The blank white screen appeared right after I’d updated the app through the Play Store. The update process seemed normal — no error messages, no warnings. But the next time I launched AppBlock, the main content area just didn’t render. The top nav bar showed up faintly, and I could sometimes see the loading spinner for a second, but then: white. Pure, empty, maddening white.

Weirdly, the app was still working in the background. My blocks were still active. I couldn’t access Instagram at the scheduled time. So the blocking logic was fine — I just couldn’t see or manage anything through the interface.

“The app was technically doing its job — blocking apps perfectly — I just had absolutely no way to control it or see what was going on.”

That’s what made this so frustrating. The app wasn’t broken in the traditional sense. It was more like the dashboard window had been painted over.

What’s actually going on under the hood

After a lot of Reddit threads, a few Stack Overflow posts, and one very useful GitHub issue, I pieced together what happens.

AppBlock uses something called a WebView internally — basically a mini web browser embedded inside the app — to display certain parts of its interface. The settings screens, the usage stats graphs, some of the dashboard elements — these are rendered as HTML inside that WebView rather than as native Android UI components.

This approach is actually really common. Lots of apps do this because it lets developers build one interface that works across platforms, or update the UI without pushing a full app update. The user never knows they’re looking at HTML in an app — it usually just looks like a regular screen.

The problem is that WebViews cache content locally. And when something goes wrong with that cache — a corrupted file, a leftover chunk from an old version, a conflict after an update — the WebView can fail to load its content and show a blank page instead of an error message. It’s the Android equivalent of a browser tab loading a blank white page when the HTML file it was pointing to has gone missing or become unreadable.

The FileProvider and the cache folder — explained simply

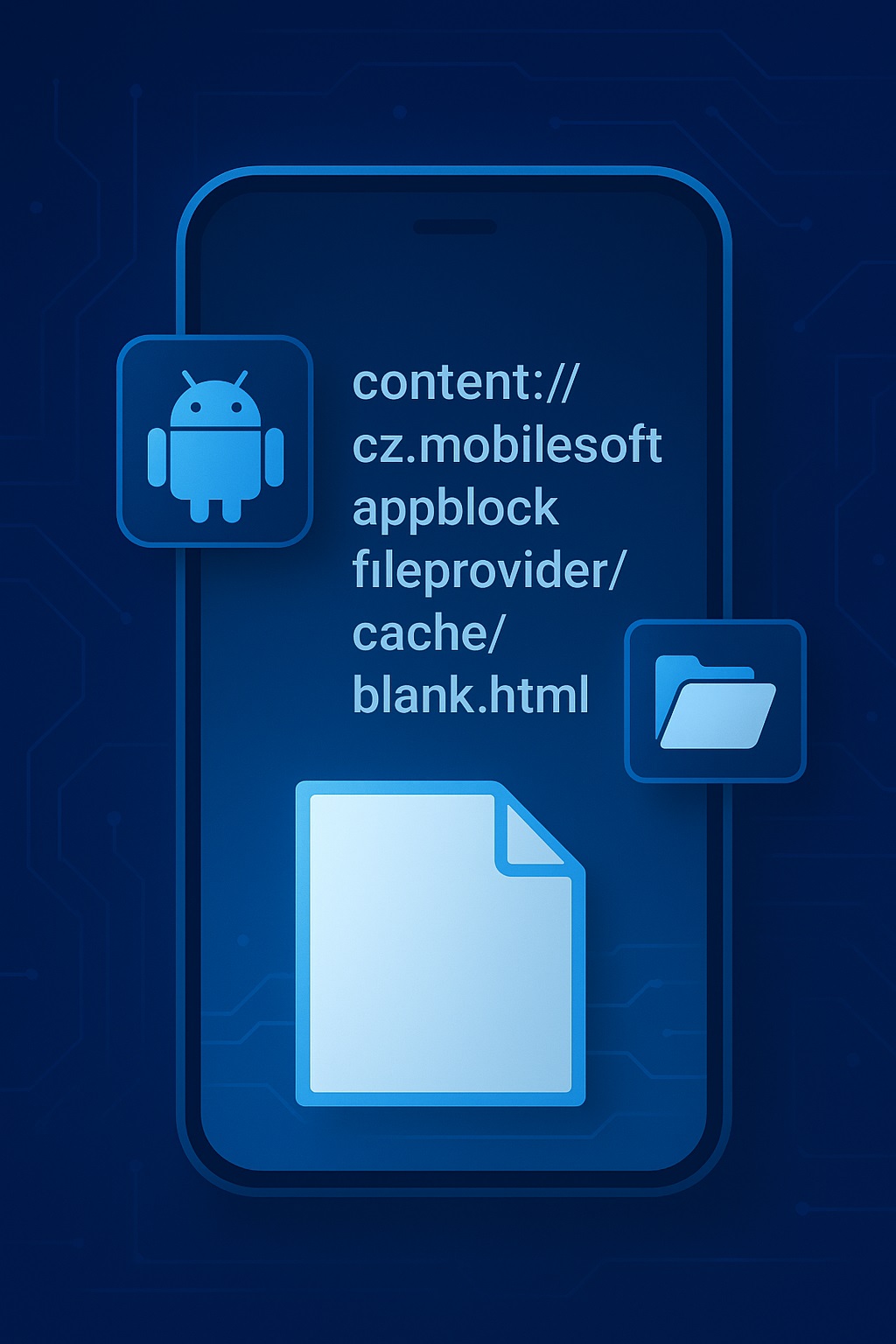

Here’s where the term you’ve probably seen — content://cz.mobilesoft.appblock.fileprovider — comes in. Let me break that down without the jargon.

In Android, apps can’t just freely read each other’s files. There’s a security system in place. When one part of an app (or another app) needs to access a file, it goes through something called a FileProvider — think of it like a secure front desk at a building. You don’t walk into the back office directly; you hand your request to the receptionist, who fetches the file and hands it back to you.
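To make the "front desk" idea a bit more concrete, here is a minimal Python sketch that splits a content:// address into its parts. The authority is the one quoted above; the path `/cache/blank.html` is a made-up example for illustration, not something I can confirm AppBlock actually uses.

```python
from urllib.parse import urlsplit

# The authority comes from the URI quoted in this post.
# The path is hypothetical and only here to show the shape of a full URI.
EXAMPLE_URI = "content://cz.mobilesoft.appblock.fileprovider/cache/blank.html"


def describe_content_uri(uri: str) -> dict:
    """Split a content:// URI into scheme, authority, and path."""
    parts = urlsplit(uri)
    if parts.scheme != "content":
        raise ValueError(f"not a content URI: {uri!r}")
    return {
        "scheme": parts.scheme,     # "content" marks a provider-backed address
        "authority": parts.netloc,  # which provider (the "front desk") to ask
        "path": parts.path,         # which file that provider should hand back
    }


if __name__ == "__main__":
    for key, value in describe_content_uri(EXAMPLE_URI).items():
        print(f"{key}: {value}")
```

The point is only that the address names a provider and a file request, not a location on disk that other apps can open directly.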

Don’t just clear ALL app data thinking it’ll be a quick fix. If you have strict mode enabled, clearing all data can lock you out of your own settings in weird ways. Clear cache only first — I’ll walk you through that below.

Step-by-step fix that actually worked

Here’s exactly what I did, in order. I’d suggest following these in sequence — start with the gentlest fix and only go further if needed.

Fix 1: Clear AppBlock’s cache

1. Open your Android Settings app (your phone’s system settings, not AppBlock).

2. Go to Apps (sometimes called “Applications” or “App Management” depending on your phone brand).

3. Find and tap AppBlock in the list.

4. Tap Storage & Cache (or just “Storage” on older Android versions).

5. Tap Clear Cache. Do NOT tap “Clear Storage” or “Clear Data” yet. Just the cache.

6. Reopen AppBlock. Check if the screen loads now.

This fixed it for about 60% of people based on what I’ve read in the AppBlock community. The blank HTML file sitting in the WebView cache gets wiped and the app rebuilds it fresh on the next launch.

If you’re still getting a white screen after doing this, move on to Fix 2.

Fix 2: Force stop, clear the cache, and reboot

1. Go back to Settings → Apps → AppBlock.

2. Tap Force Stop first. Wait 5 seconds.

3. Now tap Clear Cache again.

4. Restart your phone completely (full reboot, not just screen-off).

5. Launch AppBlock fresh after the restart.

The force stop matters because sometimes the app keeps a background process running that holds onto the corrupted cache files even while you’re trying to clear them. Stopping it first ensures nothing is locking those files.
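If you are comfortable with adb, the force stop and reboot parts of Fix 2 can be scripted from a computer. The sketch below is only a rough outline under a few assumptions: adb is installed, USB debugging is enabled, and the package ID is `cz.mobilesoft.appblock` (my guess from the FileProvider address earlier in this post). The Clear Cache step stays manual on purpose, because the adb command people usually reach for, `pm clear`, wipes all app data rather than just the cache.

```python
import subprocess
from typing import List, Optional, Tuple

# Assumption: package ID inferred from the FileProvider authority in this post.
APPBLOCK_PACKAGE = "cz.mobilesoft.appblock"

Step = Tuple[str, Optional[List[str]]]


def fix2_steps(package: str = APPBLOCK_PACKAGE) -> List[Step]:
    """Return Fix 2 as (label, adb command) pairs; None marks a manual step."""
    return [
        ("Force stop the app", ["adb", "shell", "am", "force-stop", package]),
        ("Tap Clear Cache in Settings (cache only, not storage)", None),
        ("Reboot the phone", ["adb", "reboot"]),
    ]


def run(steps: List[Step], dry_run: bool = True) -> None:
    """Print every step; only execute adb commands when dry_run is False."""
    for label, cmd in steps:
        if cmd is None:
            print(f"[manual] {label}")
            if not dry_run:
                input("Press Enter when done... ")
            continue
        print(f"[adb] {label}: {' '.join(cmd)}")
        if not dry_run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run(fix2_steps())  # dry run: lists the steps without touching the phone
```

By default nothing is sent to the phone; pass `dry_run=False` only once the printed commands look right for your device.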

Fix 3: Clear and update Android System WebView

1. Go to Settings → Apps and look for Android System WebView. You may need to enable “Show system apps” in the menu to find it.

2. Tap Storage → Clear Cache for the WebView component itself.

3. Also check if there’s an update available for Android System WebView in the Play Store. Outdated WebView versions cause rendering bugs.

4. Restart your phone and open AppBlock.
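The WebView version check in step 3 can also be done from a computer, which is handy for comparing before and after an update. This is a small sketch, assuming adb is set up and that your phone uses Google's standard WebView package, `com.google.android.webview`. The helper name `parse_version_name` is my own, and the sample text in the demo is made up to match the general shape of `dumpsys package` output.

```python
import re
from typing import Optional

# Package name of Google's Android System WebView.
WEBVIEW_PACKAGE = "com.google.android.webview"

# Command whose output the parser expects (needs adb and USB debugging).
DUMPSYS_COMMAND = ["adb", "shell", "dumpsys", "package", WEBVIEW_PACKAGE]


def parse_version_name(dumpsys_output: str) -> Optional[str]:
    """Return the first versionName value in the text, or None if absent."""
    match = re.search(r"versionName=(\S+)", dumpsys_output)
    return match.group(1) if match else None


if __name__ == "__main__":
    # Made-up sample, not real device output.
    sample = "    versionCode=1 minSdk=26 targetSdk=34\n    versionName=1.2.3.4\n"
    print("Command to run:", " ".join(DUMPSYS_COMMAND))
    print("Parsed version:", parse_version_name(sample))
```

Run the printed command yourself, paste its output into `parse_version_name`, and compare the result with the version the Play Store listing shows.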

Fix 4: Uninstall and reinstall AppBlock

1. Before uninstalling: If you have an AppBlock Pro subscription, make sure it’s tied to your Google account. It should restore automatically after reinstalling.

2. If you’re on strict mode, you may need to temporarily disable it first (or wait for the time window to expire). Strict mode is designed to prevent uninstalling during active sessions.

3. Uninstall AppBlock completely from Settings → Apps → AppBlock → Uninstall.

4. Reinstall from the Play Store fresh. All cached files are wiped in a full uninstall.

Mistakes I made before I figured it out

I spent about two hours going in circles before landing on the actual fix. Here’s what didn’t work and why — so you don’t waste your time the same way:

Mistake: Restarting the phone 4 times in a row expecting something to change. Without clearing the cache, restarts don’t touch the problem files.

Instead: One restart after clearing cache. That’s the sequence that matters, not repeated restarts alone.

Mistake: Immediately clearing all app data (storage). This can wipe your block profiles and schedules, which you’d have to rebuild from scratch.

Instead: Clear cache only first. Your settings and profiles are usually stored separately from the cache and survive a cache wipe.

Mistake: Updating AppBlock without also checking if Android System WebView is current. Both need to be in sync.

Instead: After any AppBlock update, open the Play Store and search for “Android System WebView” to check if it also needs an update.

Mistake: Reporting the bug as “app is broken” and leaving a 1-star review before trying any of these steps. The fix is often simple.

Instead: Try all four fixes above first. If none work, then contact MobileSoft support. They’re actually responsive.

How to stop this from happening again

Once I understood what caused the problem, I started doing a couple of things differently to prevent it from recurring:

Maintenance habits that actually help

- Keep Android System WebView updated. Go to the Play Store, search “Android System WebView,” and tap Update if it shows. Do this every month or two, or whenever you update AppBlock.

- Clear AppBlock’s cache monthly. It takes 10 seconds and prevents the slow buildup of stale cached HTML files. Settings → Apps → AppBlock → Storage → Clear Cache.

- Don’t run AppBlock updates while in the middle of a strict mode session if you can help it. The update mid-session seems to be what triggered the issue for me — the new HTML files conflict with whatever the running session cached.

- Enable auto-updates for system apps separately from user apps. WebView is technically a system component but updates via the Play Store — it doesn’t always auto-update on all devices.

I also found that the issue was more common on phones running Android 12 and 13 than on Android 14. Not sure exactly why, but I’ve seen this mentioned in a few places. If you’re on an older Android version, it might be worth checking whether your phone manufacturer has released any system updates.

One more thing: if you’re using a Samsung device, I’ve seen it suggested that some Samsung builds route part of their WebView rendering through Samsung Internet rather than the standard Google component. I haven’t been able to confirm that, so treat it as a long shot. If nothing else has worked, try clearing the cache for Samsung Internet too, even if you don’t use it as your browser.

Final thoughts

The blank HTML screen bug is annoying, but it’s not a sign that AppBlock is poorly made — this kind of WebView caching issue can happen to any app that uses web-rendered UI components. The app itself (the blocking engine) kept working perfectly through all of this. That’s actually a sign the core is solid.

If you’re still stuck after trying everything above, MobileSoft does have a support email and they’ve historically responded within a day or two. Sometimes the issue is specific to a device model or Android build that they need to patch on their end.

But for most people reading this, clearing the cache — and making sure Android System WebView is up to date — will be all it takes. It’s one of those fixes where you feel slightly embarrassed it took so long to find, but then relieved it was so simple in the end.

Mitchell Besser: The Physician Who Built a Global Social Innovation Movement

When people think about innovation, they often picture Silicon Valley founders, disruptive technologies, or venture-backed startups. Yet some of the most transformative innovations emerge from entirely different environments. The story of Mitchell Besser is a powerful example. As a physician working on the front lines of the HIV epidemic in South Africa, Besser identified a problem that medicine alone could not solve. His response ultimately became a globally recognized social enterprise model that has improved millions of lives.

What makes Mitchell Besser’s journey particularly compelling for entrepreneurs and startup founders is that it mirrors many of the principles behind successful business innovation. He identified a gap in the market—or in this case, the healthcare system—designed a scalable solution, validated it in real-world conditions, and expanded it across multiple countries. His work demonstrates that innovation is not limited to technology products; it can also reshape how societies address some of their most pressing challenges.

Who Is Mitchell Besser?

Dr. Mitchell Besser is a Harvard-trained obstetrician and gynecologist whose career has focused on improving women’s health and public health outcomes. In 1999, he joined the University of Cape Town’s Department of Obstetrics and Gynecology, where he worked on programs aimed at preventing mother-to-child transmission of HIV. During this period, he witnessed a troubling pattern. Many pregnant women who tested positive for HIV received medical treatment options but failed to return for follow-up care due to fear, stigma, isolation, and emotional distress.

Besser quickly realized that the challenge was not purely medical. Clinics could provide medications, but they could not always provide the trust, understanding, and emotional support that patients desperately needed. This insight became the foundation for one of the most successful social health innovations of the 21st century.

The Birth of mothers2mothers

In 2001, Mitchell Besser founded mothers2mothers (m2m) in Cape Town, South Africa. The concept was simple yet revolutionary. Instead of relying solely on healthcare professionals, the organization employed women living with HIV who had successfully navigated treatment and motherhood. These women, known as Mentor Mothers, would support other pregnant women facing similar circumstances.

The idea challenged conventional healthcare delivery models. Rather than treating patients as passive recipients of care, mothers2mothers empowered them to become active participants and leaders within their communities.

The organization began at a single hospital site. From those humble beginnings, it expanded into a multinational operation serving communities across Africa. Today, mothers2mothers is widely recognized as a pioneering example of peer-to-peer healthcare support.

Why Mitchell Besser’s Approach Worked

Many health interventions fail because they focus exclusively on clinical outcomes while overlooking human behavior. Besser understood that information alone does not necessarily change decisions.

When women received an HIV diagnosis, fear often became a barrier to treatment. Mentor Mothers could bridge that gap because they had lived through the same experience. They provided something healthcare systems frequently struggle to deliver: credibility born from shared experience.

This model created several advantages:

| Traditional Healthcare Model | mothers2mothers Model |
| --- | --- |
| Expert-to-patient communication | Peer-to-peer support |
| Clinical focus | Clinical and emotional support |
| Limited community engagement | Deep community integration |
| Patients as recipients | Patients as mentors and leaders |
| Often resource-intensive | Highly scalable and cost-effective |

For startup founders, this lesson is remarkably familiar. Successful products often emerge when organizations deeply understand user behavior rather than simply delivering features. Mitchell Besser applied a similar principle within public health.

Scaling a Social Enterprise

One of the most impressive aspects of Besser’s work is not the original idea itself but its scalability.

Many nonprofit initiatives achieve local success yet struggle to grow. mothers2mothers avoided this trap by creating a repeatable model that could be adapted across different communities and healthcare systems.

Over time, the organization expanded throughout sub-Saharan Africa and reached millions of women, children, and families. It also created employment opportunities for thousands of women living with HIV, transforming beneficiaries into contributors and leaders.

This ability to scale mirrors the challenges faced by technology startups. Growth requires operational systems, leadership development, funding strategies, and a clear mission. Besser’s success demonstrates that the same entrepreneurial principles apply whether you are building software or addressing public health crises.

Leadership Lessons from Mitchell Besser

Entrepreneurs often look to technology founders for leadership inspiration, but Mitchell Besser’s career offers equally valuable insights.

The first lesson is the importance of listening. Rather than imposing a solution from the top down, he observed the realities patients faced and built a model around their lived experiences.

The second lesson is recognizing hidden assets. Many organizations focus on what they lack. Besser focused on what was already available. He saw HIV-positive mothers not as vulnerable patients but as an untapped resource capable of helping others.

The third lesson involves mission-driven innovation. While profitability drives many startups, the most enduring organizations often pursue a larger purpose. Besser’s work demonstrates how a compelling mission can attract talent, partnerships, funding, and long-term commitment.

Beyond HIV Prevention

Although mothers2mothers initially focused on preventing mother-to-child transmission of HIV, the organization’s impact expanded significantly over time.

The model evolved into a broader platform supporting maternal health, child health, adolescent services, family wellness, and community healthcare initiatives. By leveraging trusted peer relationships, the organization demonstrated that its methodology could address multiple health challenges beyond its original purpose.

This evolution reflects another important startup principle: successful organizations rarely remain fixed on their initial use case. They discover adjacent opportunities and expand based on proven strengths.

Mitchell Besser did not simply create a program. He created a framework capable of adapting to changing needs.

The Launch of AgeWell Global

Besser’s entrepreneurial journey did not stop with mothers2mothers.

In 2014, he launched AgeWell Global, applying many of the same peer-support principles to aging populations. The organization trains and employs older adults to support fellow seniors, helping combat loneliness, improve well-being, and promote healthier aging.

This transition highlights a hallmark of visionary entrepreneurs: the ability to transfer successful models across different industries and challenges.

The underlying insight remained the same. Human connection can be one of the most powerful tools for improving outcomes. Whether supporting mothers living with HIV or older adults facing isolation, peer engagement creates trust that institutions alone often struggle to establish.

For founders, this demonstrates how core innovations can often be adapted to entirely new markets.

Recognition and Global Influence

Over the years, Mitchell Besser and mothers2mothers have received significant international recognition. The organization’s innovative approach has earned awards from major institutions and attracted support from global health leaders, foundations, and governments. Besser himself has been honored for his contributions to healthcare innovation and social entrepreneurship.

In 2023, the University of Cape Town awarded him an honorary doctorate, recognizing the profound impact of his work on society and global health.

Such recognition reflects more than personal achievement. It highlights the growing appreciation for solutions that combine medical expertise, community empowerment, and entrepreneurial thinking.

Why Mitchell Besser Matters Today

The story of Mitchell Besser remains highly relevant in today’s innovation landscape.

Businesses, governments, and nonprofits increasingly recognize that many challenges cannot be solved through technology alone. Human-centered design, behavioral insights, and community engagement are becoming essential components of effective solutions.

Besser understood this long before it became a popular management philosophy. He recognized that people are not simply users, patients, or customers. They are partners in the process of creating meaningful change.

His work offers a blueprint for entrepreneurs seeking to build organizations with lasting impact. It demonstrates how empathy can become a competitive advantage, how community can become infrastructure, and how purpose-driven innovation can achieve extraordinary scale.

Conclusion

Mitchell Besser’s legacy extends far beyond medicine. He transformed a healthcare challenge into a global movement by identifying a simple but powerful truth: people who share experiences can help one another in ways institutions often cannot.

Through mothers2mothers and later AgeWell Global, he proved that innovation does not always require groundbreaking technology. Sometimes it requires a fresh perspective on human relationships and an unwavering commitment to solving real-world problems.

For startup founders, entrepreneurs, and business leaders, Besser’s journey serves as a reminder that the most impactful innovations often emerge when expertise meets empathy. His work continues to inspire a new generation of leaders who believe that scalable solutions can improve lives while transforming entire systems in the process.

Barbara Boothe: The Private Woman Behind a Tech and Hollywood Legacy

In an era where visibility often seems inseparable from success, Barbara Boothe stands out as a remarkable exception. While many people associated with influential business leaders embrace public attention, Boothe chose a different path. Her story is not one of constant media appearances or personal branding. Instead, it is a story of privacy, resilience, and quiet influence during some of the most transformative decades in technology and entertainment.

Barbara Boothe is widely recognized as the former wife of Larry Ellison, the co-founder of Oracle, and the mother of Hollywood producers David Ellison and Megan Ellison. Yet reducing her identity to those relationships overlooks the broader significance of her life. Her journey offers a unique perspective on the early days of Silicon Valley, the realities of raising ambitious children, and the value of maintaining personal independence in a world obsessed with public recognition.

Who Is Barbara Boothe?

Barbara Boothe entered public awareness largely because of her connection to Larry Ellison during Oracle’s formative years. Before Oracle became one of the world’s most influential software companies, Boothe worked at the company when it was still known as Relational Software Inc., a small startup operating in a rapidly evolving technology landscape. Sources consistently identify her role as a receptionist during this early period, placing her at the heart of a company that would later transform enterprise computing worldwide.

While she was never involved in Oracle’s executive leadership, her presence during the company’s early growth provides a fascinating window into Silicon Valley history. She witnessed firsthand the intensity, uncertainty, and ambition that defined the startup ecosystem long before technology entrepreneurs became cultural icons.

What makes Barbara Boothe particularly intriguing is not her visibility but her absence from the spotlight. Unlike many individuals connected to influential business figures, she has consistently maintained a low public profile, allowing her actions and family legacy to speak louder than public appearances.

Barbara Boothe and the Early Silicon Valley Era

The early 1980s represented a pivotal moment in technology history. Personal computers were beginning to reshape business operations, software companies were emerging as economic powerhouses, and Silicon Valley was evolving into a global innovation center.

Barbara Boothe found herself connected to this transformation during a period when Oracle was still establishing its identity. At the time, few could have predicted that the company would grow into one of the largest enterprise software providers in the world.

Understanding Boothe’s role requires appreciating the environment of the era. Startups operated with small teams, limited resources, and enormous ambitions. Every employee, regardless of position, contributed to the culture and daily operations of the organization. While her role was administrative, she experienced the realities of startup life during a period that would later become legendary in technology history.

Her connection to these early years gives her story relevance beyond celebrity associations. It illustrates how many individuals contribute to major business journeys, even when their names never appear in corporate histories.

Marriage, Family, and a New Chapter

Barbara Boothe married Larry Ellison in 1983, during a critical stage in Oracle’s development. Their marriage lasted until 1986, a period that coincided with the company’s growing momentum and increasing visibility.

Although their relationship eventually ended in divorce, it produced two children who would go on to achieve extraordinary success in their own right.

Rather than remaining tied to the public narrative surrounding Oracle and Silicon Valley, Boothe largely stepped away from media attention after the divorce. This decision would become a defining characteristic of her life.

Her focus shifted toward family and creating a stable environment for her children. While little has been publicly documented about her parenting philosophy, the accomplishments of David and Megan Ellison suggest a household that encouraged independence, creativity, and ambition.

The Hollywood Connection

One of the most compelling aspects of Barbara Boothe’s legacy is reflected in the achievements of her children.

David Ellison became the founder and CEO of Skydance Media, a production company responsible for major film and television projects. Under his leadership, the company has become a significant force in modern entertainment, producing blockbuster franchises and partnering with some of Hollywood’s most influential studios.

Megan Ellison took a different but equally impactful path. As the founder of Annapurna Pictures, she established a reputation for supporting artistically ambitious and critically acclaimed films. Her company became known for backing projects that challenged conventions and elevated independent storytelling.

The success of both siblings demonstrates an interesting contrast. While their father helped build one of the world’s most influential technology companies, they built careers in the creative arts. Their accomplishments suggest an upbringing that valued exploration and personal passion rather than simply following established paths.

Family Legacy at a Glance

| Family Member | Primary Field | Notable Contribution |
| --- | --- | --- |
| Barbara Boothe | Private Life & Family Leadership | Maintained a private life while raising successful children |
| Larry Ellison | Technology | Co-founded Oracle and helped shape enterprise software |
| David Ellison | Entertainment | Founded Skydance Media and produced major film franchises |
| Megan Ellison | Entertainment | Founded Annapurna Pictures and championed independent cinema |

This combination of technology, entrepreneurship, and creative achievement makes the family’s story particularly distinctive.

Life Beyond Public Attention

One of the most fascinating elements of Barbara Boothe’s story is her commitment to privacy.

In today’s digital environment, public figures and their families often face intense scrutiny. Social media, online publications, and constant news cycles make it increasingly difficult to maintain personal boundaries. Yet Boothe has consistently chosen a different approach.

Rather than building a public persona, she appears to have prioritized personal fulfillment and family life. Reports have associated her with equestrian pursuits and ownership interests connected to Wild Turkey Farm, reflecting interests far removed from the corporate spotlight.

This deliberate distance from public attention has contributed to the enduring curiosity surrounding her life. Ironically, her refusal to seek fame has made people even more interested in understanding her story.

Lessons Entrepreneurs Can Learn from Barbara Boothe

Although Barbara Boothe is not known as a startup founder or corporate executive, her story contains valuable lessons for entrepreneurs and business leaders.

First, influence is not always visible. In business culture, attention often gravitates toward CEOs, founders, and public personalities. However, many successful individuals are shaped by supportive family members, mentors, and partners whose contributions remain largely unseen.

Second, success takes many forms. Modern entrepreneurship frequently equates achievement with valuation metrics, funding rounds, and public recognition. Boothe’s life offers an alternative perspective. Personal fulfillment, family impact, and maintaining one’s values can be equally meaningful measures of success.

Third, privacy has value. In a world where personal and professional boundaries increasingly blur, her example highlights the benefits of maintaining control over one’s narrative. Not every accomplishment needs public validation.

Finally, long-term legacy often emerges through people rather than institutions. While companies rise and fall, the influence we have on future generations can create impacts that extend far beyond business success.

Why Barbara Boothe Continues to Fascinate People

Public interest in Barbara Boothe persists because her story sits at the intersection of several influential worlds. She was connected to the rise of Silicon Valley during a formative era, witnessed the growth of Oracle from its early stages, and became the mother of two influential figures in modern Hollywood.

Yet unlike many individuals connected to wealth and power, she never pursued public recognition. This contrast creates a compelling narrative that resonates with readers today.

Her life reminds us that history is often shaped not only by the people whose names dominate headlines but also by those who quietly influence events from behind the scenes. In an age of constant self-promotion, that perspective feels increasingly rare.

Conclusion

Barbara Boothe remains one of the more intriguing figures connected to both technology and entertainment. Her life reflects a unique blend of proximity to extraordinary success and a steadfast commitment to privacy. While much of the public attention surrounding her stems from her connection to Larry Ellison and her accomplished children, her story ultimately stands on its own.

She witnessed the early rise of a technology giant, navigated life during Silicon Valley’s formative years, and helped raise two individuals who would leave their own mark on Hollywood. Yet throughout these experiences, she maintained a personal identity separate from fame and public scrutiny.

For entrepreneurs, founders, and professionals, Barbara Boothe’s story serves as a reminder that influence is not always measured by visibility. Sometimes, the most meaningful legacies are built quietly, through values, relationships, and the people we help shape along the way.

Latane Brown: The Untold Story Behind a NASCAR Legacy

When people discuss the history of NASCAR, conversations almost inevitably lead to the legendary career of Dale Earnhardt. His accomplishments on the track transformed him into one of the most recognizable figures in American motorsports. Yet behind many public figures are individuals whose stories remain largely untold. One such person is Latane Brown, a woman whose connection to Earnhardt placed her in the orbit of fame while she largely chose a life away from the spotlight.

In an era when personal lives are often documented across social media and entertainment platforms, the story of Latane Brown stands out because of its privacy. While countless biographies have examined Earnhardt’s racing achievements, relatively little attention has been given to the people who shared important chapters of his life. Understanding Latane Brown offers a more complete picture of the personal history surrounding one of NASCAR’s most influential families.

Who Was Latane Brown?

Latane Brown is most widely recognized as the first wife of Dale Earnhardt. The two married as teenagers during a period when neither could have predicted the enormous fame that would eventually follow Earnhardt’s racing career. Their relationship began long before championship trophies, sponsorship deals, and national recognition entered the picture.

Their marriage reflected a different chapter in Earnhardt’s life—one characterized by youth, ambition, and uncertainty. At the time, he was still developing his identity and pursuing opportunities that would later propel him into NASCAR history. Brown was part of that foundational period, supporting a young man who had not yet become a motorsports icon.

Unlike many individuals connected to famous athletes or celebrities, Brown maintained a remarkably low public profile. This decision has contributed to the intrigue surrounding her life and explains why many people continue searching for information about her decades later.

The Early Relationship Between Latane Brown and Dale Earnhardt

The story of Latane Brown and Dale Earnhardt began in their teenage years. They married in 1968, a time when both were still very young and navigating adulthood. Their relationship represented the challenges and aspirations common among many young couples of the era.

Shortly after their marriage, the couple welcomed a son, Kerry Earnhardt. The arrival of a child brought additional responsibilities and pressures. Like many young families, they faced the realities of balancing personal goals, financial obligations, and family life.

Although their marriage eventually ended, it remains an important chapter in the Earnhardt family story. The relationship contributed to the foundation of a family legacy that would later become deeply connected to NASCAR history.

Latane Brown’s Role in the Earnhardt Family Legacy

One reason interest in Latane Brown remains strong today is her connection to the broader Earnhardt family legacy. Through her son Kerry Earnhardt, Brown became part of a lineage that would continue influencing motorsports for generations.

The Earnhardt name grew into one of the most respected brands in racing. While much of the public focus remained on Dale Earnhardt and later his children and grandchildren, Brown’s place in that family history remains significant. She was present at the beginning of a story that would ultimately become part of American sports culture.

Family histories often focus on the most visible figures, but those who help shape early chapters can be equally important. Brown’s contribution to that legacy comes not through public appearances or media interviews but through her role in the family’s origins.

Why Latane Brown Chose Privacy

One of the most remarkable aspects of Latane Brown’s story is her commitment to privacy. In today’s media environment, individuals connected to celebrities frequently embrace public attention. Brown took a different path.

Very little information exists regarding her professional career, personal interests, or day-to-day life. Public records and historical references reveal only limited details. This absence of publicity appears to have been a deliberate choice rather than a consequence of circumstance.

Her decision highlights an important reality about fame: not everyone associated with a public figure seeks visibility. While celebrity culture often rewards exposure, some individuals prioritize personal relationships, family, and privacy over public recognition.

This choice may explain why Brown continues to generate curiosity. In a world where information is readily available, mystery becomes increasingly rare.

Key Facts About Latane Brown

The following table summarizes some of the most widely known information about Latane Brown.

| Category | Information |
| --- | --- |
| Known For | First wife of Dale Earnhardt |
| Marriage Year | 1968 |
| Children | Kerry Earnhardt and later another child from a subsequent marriage |
| Public Profile | Extremely private |
| Connection to NASCAR | Through the Earnhardt family |
| Historical Significance | Part of the early Earnhardt family story |

The limited public information surrounding Brown has made her a figure of ongoing interest among NASCAR historians and fans seeking to understand the people behind the sport’s biggest names.

The Human Side of Fame

Stories like that of Latane Brown reveal something larger about fame itself. Public attention often focuses on achievements, championships, and records. Yet every successful public figure is also part of a network of personal relationships that shape their journey.

Before Dale Earnhardt became “The Intimidator,” he was a young husband and father trying to build a future. Brown was present during those formative years, witnessing the struggles and ambitions that preceded widespread success.

This perspective humanizes a sports legend who is often viewed through the lens of statistics and championships. It reminds audiences that behind every iconic career are personal experiences that influence the individual’s development.

For readers interested in leadership, entrepreneurship, or personal growth, this lesson carries practical relevance. Success stories rarely emerge in isolation. Family members, spouses, friends, and mentors often play critical roles in helping individuals navigate uncertainty and pursue ambitious goals.

Public Interest Decades Later

Despite maintaining a low profile, Latane Brown continues to attract attention from researchers, journalists, and racing enthusiasts. Several factors contribute to this enduring interest.

First, the Earnhardt family remains one of the most influential names in NASCAR history. Any individual connected to the family naturally becomes part of that larger narrative.

Second, Brown’s privacy creates an element of intrigue. In contrast to modern celebrity culture, where personal details are frequently shared, her story contains many unanswered questions.

Third, historical interest in sports continues to expand. Fans increasingly seek to understand not only athletic achievements but also the personal histories surrounding iconic figures. As a result, people connected to major sports legends often become subjects of renewed attention years after the events themselves occurred.

Lessons from Latane Brown’s Story

Although Latane Brown never pursued public fame, her story offers several meaningful insights.

One lesson is that significance does not always require visibility. Many people influence history indirectly through relationships, support systems, and personal contributions that never appear in headlines.

Another lesson involves the value of privacy. In a digital age where exposure is often encouraged, Brown’s life demonstrates that individuals can choose a different path. Personal fulfillment does not necessarily depend on public recognition.

Finally, her story underscores the importance of context. Understanding influential figures requires examining the people and circumstances that shaped their early lives. Looking beyond public accomplishments often reveals richer and more nuanced narratives.

The Lasting Relevance of Latane Brown

As interest in NASCAR history continues to grow, the story of Latane Brown remains an important piece of the larger puzzle. While she may never be as publicly recognized as the racing legend she once married, her place in the Earnhardt family narrative ensures lasting historical relevance.

Brown represents a quieter side of a highly public story. Her life serves as a reminder that history is not only created by those in the spotlight but also by those who influence events from behind the scenes.

For modern audiences, that perspective is increasingly valuable. It encourages a broader understanding of success, legacy, and personal choice. Rather than measuring importance solely through fame or public visibility, Brown’s story highlights the enduring impact of relationships, family, and individual decisions.

Conclusion

The story of Latane Brown is ultimately one of quiet significance. Known primarily as Dale Earnhardt’s first wife, she occupies a unique place in NASCAR history while remaining one of its most private figures. Her connection to the Earnhardt family, her role in its early years, and her decision to avoid public attention have all contributed to the lasting curiosity surrounding her life.

While many biographies focus on championships and public achievements, Brown’s story reminds us that history is also shaped by the people who stand outside the spotlight. Her legacy may not be defined by fame, but it remains an important chapter in one of America’s most celebrated sports families.
